Marketing teams today are piling on AI and automation. A growth marketer might export a list of high-intent accounts from the CRM and paste it into ChatGPT to draft personalized outreach. A campaign lead might upload performance spreadsheets or call transcripts into an AI tool to analyze what’s working and what needs improvement.

Another marketer might build a Clay or n8n automation to enrich and route leads in real time. These workflows are efficient, turning hours of work into minutes. In fact, some surveys show roughly 88% of marketers already rely on AI tools in their day-to-day roles.

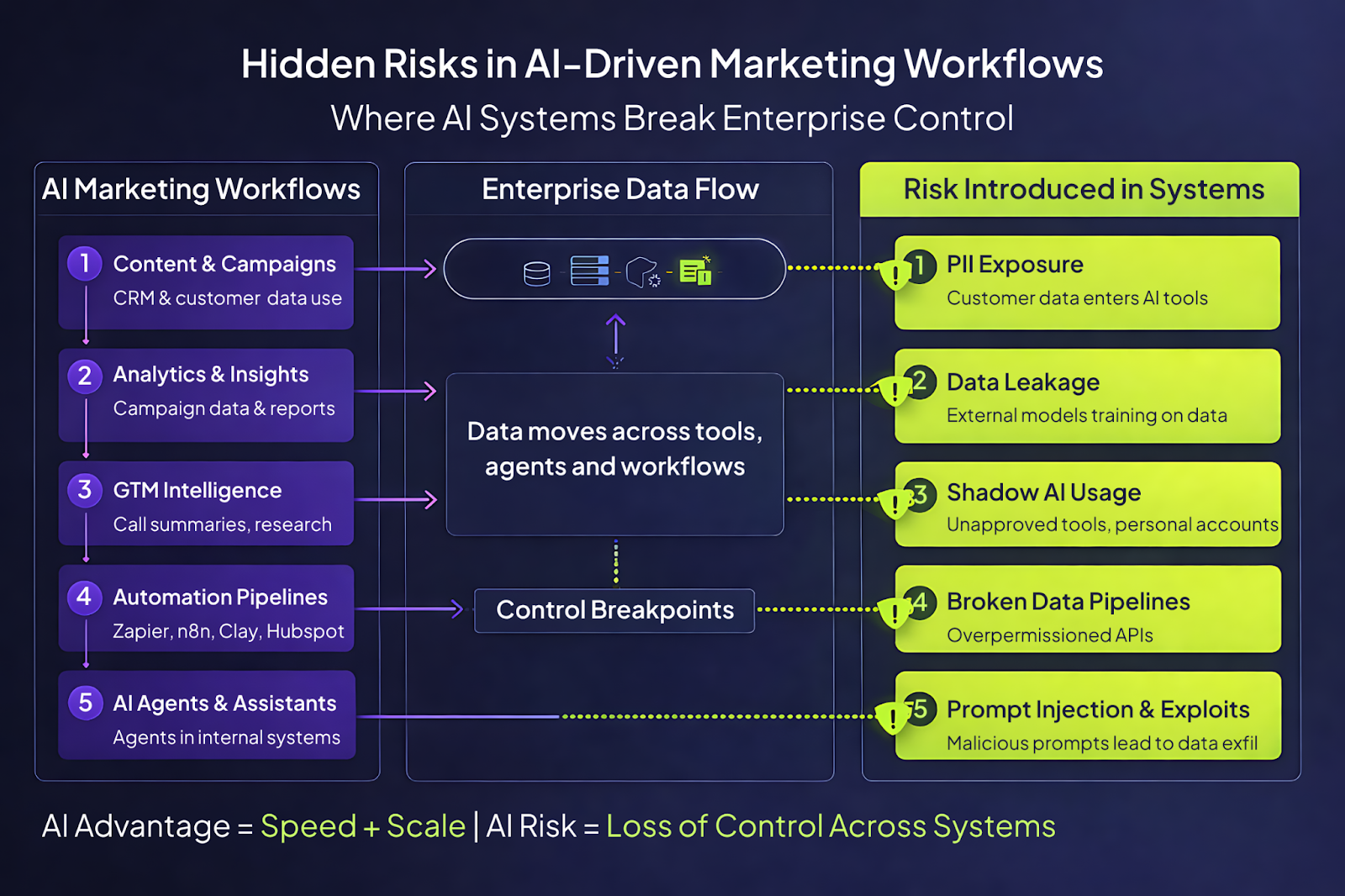

But all of this speed and innovation comes with a hidden cost. Every time sensitive customer data, financial numbers, or internal docs get pushed into a third-party AI or automated pipeline, the organization expands its attack surface. Data that lived in controlled systems like CRMs and internal databases now moves across networks outside IT’s full control. This efficiency introduces a brand-new AI security and governance risk.

Marketing’s New Role and Rising Risk

Marketing isn’t what it used to be. It’s no longer just creative campaigns and brand messaging. Today’s marketing function handles massive amounts of sensitive data, from customer PII (names, emails, firmographics) to behavioral analytics (usage patterns, intent signals), and in industries like healthcare, even PHI. That data is now being processed through AI systems and custom workflows at scale.

Meanwhile, marketing teams thrive on rapid experimentation. New tools (ChatGPT, Claude, Notion AI, Zapier, n8n, etc.) are adopted with low friction. A simple “Let me try this AI tool quickly” mindset drives fast results. But it also means valuable data moves faster and through more hands than ever.

The result: marketing has become one of the most data-exposed functions in many organizations. Teams have a high appetite for “move fast and learn” and often trade processes for speed.

The Artificial Intelligence Index Report 2025 shows that marketing and sales benefit most from AI, with 71% of respondents who use AI in that function reporting cost reduction or revenue gains.

AI is deeply embedded in marketing’s operations and rightly so. But with every integration and every new workflow, the chance of unintended data leaks grows.

Security experts warn that this gap between rapid AI adoption and governance is a dangerous blind spot.

For example, IBM’s 2025 breach report found that 97% of companies experiencing an AI-related breach had no proper access controls in place. Marketing workflows, by their very nature, often outpace the creation of formal policies.

Many teams copy-paste customer lists into public tools or experiment with new AI features before anyone in IT even knows.

How AI Can Expose Data in Marketing

To make this concrete, here are some specific ways AI-driven marketing can lead to data exposure:

- Direct data exposure: It’s common for marketers to upload spreadsheets, customer lists, or CRM extracts into AI tools for analysis. Without safeguards, any sensitive information in those inputs can leak.

In practice, even a seemingly harmless act like summarizing sales call transcripts or campaign results in ChatGPT can result in confidential content being sent to a cloud model. That data could be stored, or even used to train future models, if enterprise policies aren’t in place or if a personal ChatGPT account was used, where training on user content is on by default.

In regulated industries (such as healthcare or financial services), even a single exposure of PHI or financial details can result in severe compliance violations.

- Shadow AI usage: The biggest risk multiplier is the sheer number of AI tools being used outside of IT’s view. This “shadow AI” happens when teams adopt tools without informing security or IT - whether through personal accounts, new apps, or self-built workflows. IBM reports that about one in five organizations has already experienced a breach coming from these unsanctioned AI activities. Worse, incidents involving shadow AI tend to leak more PII: IBM found 65% of shadow-AI-related breaches exposed customer data, vs. 53% in an average breach.

In practice, shadow AI could mean anything from a marketer using a personal AI account on unencrypted Wi-Fi to an entire team building workflows on a low-code platform they set up themselves. If the company doesn’t know it’s happening, it can’t secure it.

- Unsecured automation pipelines: Modern marketing often relies on integration platforms (Zapier, n8n, Clay, etc.) to connect tools and automate workflows. These are powerful efficiency boosters, but they can move data in unsafe ways. For example, a poorly scoped Zapier integration might pull data from the CRM and send it through multiple steps with overly broad permissions.

If endpoints aren’t locked down or API keys aren’t tightly scoped, an attacker (or a misconfigured process) could exfiltrate more data than intended.

Consider a lead enrichment pipeline that calls multiple APIs: if any of those endpoints is exposed to the internet without proper authentication, an attacker could exploit it. Each additional integration creates a new link in the chain that needs to be secured.

Automation is great for productivity, but it also creates more pathways for data to slip through unnoticed unless security is factored in right from the start.

- AI agents and knowledge bases: As enterprises build more advanced AI capabilities, marketers might deploy AI “agents” that connect directly to internal systems.

Examples include an LLM reading from a Notion knowledge base, a custom chatbot tied to sales call recordings, and LLMs embedded in CRM or collaboration tools. If these agents are given broad access, they can unintentionally expose data they shouldn’t.

For example, an AI assistant that’s allowed to pull from all customer service tickets might leak private details if prompted the wrong way. Every AI agent’s access should be strictly scoped: open up only the specific documents or data segments it needs, with explicit read and write permissions. Without that, an autonomous agent could expose your data at any time.

- Prompt injection and data exfiltration: Finally, AI introduces new attack techniques. Prompt injection, in which crafted input steers a model into ignoring its instructions, is one of them.

A related example is the recent incident in which people used Chipotle’s website chatbot for coding help and other off-topic requests. That isn’t technically prompt injection, since the chatbot had no guardrails restricting the kinds of responses it should give. But it illustrates the point: anyone can experiment with prompts, and with enough persistence they can get public-facing AI tools to share data they have no business sharing.
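For the unsecured-pipeline risk described above, one common safeguard is to have each automation step verify that an incoming webhook really came from the expected sender. The following is a minimal sketch using an HMAC-SHA256 signature over the raw request body. The secret, the payload, and the function names are hypothetical and chosen for illustration; real platforms differ in which header carries the signature and how it is encoded, so treat this as an outline of the idea rather than any vendor's scheme.

```python
import hashlib
import hmac


def sign_payload(secret: bytes, body: bytes) -> str:
    """Return the hex HMAC-SHA256 signature a sender would attach to a request."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_authentic(secret: bytes, body: bytes, received_signature: str) -> bool:
    """Accept the webhook only if the signature matches the raw request body."""
    expected = sign_payload(secret, body)
    # compare_digest compares in constant time to avoid timing side channels
    return hmac.compare_digest(expected, received_signature)


# Hypothetical shared secret and payload (assumptions, not from any real system)
SECRET = b"replace-with-a-secret-from-your-vault"
body = b'{"lead_id": "123", "score": 87}'
good_signature = sign_payload(SECRET, body)

print(is_authentic(SECRET, body, good_signature))                # untampered request
print(is_authentic(SECRET, body + b"tampered", good_signature))  # modified request
```

A step that rejects unsigned or mismatched requests cannot be driven by anyone who merely discovers its URL, which narrows one of the pathways described in this list.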

Most of these issues arise without any malicious intent. Most teams simply want to iterate faster. They’re not trying to leak data. They just haven’t taken the time to put guardrails in place.

AI Is Not the Enemy, It’s the Accelerator

It’s important to be clear: the solution isn’t to slam the brakes on AI use in marketing teams. That ship has sailed. Marketers should be using AI; it fundamentally transforms how they work.

AI-driven workflows offer significant business benefits such as faster market research, deeper customer segmentation, and personalized messaging at scale.

AI can help marketing teams be smarter and more productive. It enables fast idea generation, automates repetitive tasks, and surfaces insights that would take hours of manual analysis. Sales teams get better prep, campaigns get tighter targeting, and marketing can react to market shifts in near-real time. Those are real competitive advantages.

The point is that AI works. The problem isn’t AI as a technology but the uncontrolled way it’s usually adopted. The goal should be to let marketing move fast with AI safely. In other words, the objective is controlled acceleration.

Building Safe AI Workflows: Guardrails for Marketers

What does responsible AI use look like in practice? It looks the way responsible use of any powerful tool does: guardrails, oversight, and best practices.

Here are the key elements of a secure and agile marketing AI program:

- Enterprise-grade AI accounts: Require all AI work to happen through company-controlled tools, not personal accounts. For example, marketers should use the enterprise edition of ChatGPT with SSO login, rather than a personal login.

This ensures data handling policies can be enforced centrally. Approved enterprise tools can be configured not to train on your inputs, and they produce logs tied to your organization. By contrast, a personal account offers no oversight or control. Eliminate unsanctioned access: no personal AI logins for work.

- Data discipline: Not all data is equal. Clearly define what can and cannot be fed into AI tools. Personally identifiable information, sensitive financial figures, and health data should be off-limits unless explicitly encrypted or anonymized. Use tools like Singulr to automatically detect and redact or block such prompts. For example, customer names or credit card numbers should be scrubbed from a prompt automatically before it ever leaves your network. If your system flags a violation, it should either block the request or at least alert security.

In practice, this means scanning what marketers input into AI UIs and what’s sent through automation pipelines. Solutions like Singulr can also enforce more fine-grained policies at runtime. These controls ensure that even if a marketer accidentally attempts something risky, the tool prevents it.

- Identity and access control: Apply the principle of least privilege everywhere. Marketers and the bots they deploy should have access only to the data and systems they need. For human users, that means standard IAM practices such as role-based permissions, single sign-on, MFA, and regular access reviews. But it also applies to AI integrations. If you grant an AI agent access to the CRM, make it read-only. If you do allow write actions, run them in a sandbox environment first. For example, require human approval before AI-driven emails or ad campaigns are sent.

- Secure automation design: Treat marketing automations like any other code or system. Validate all endpoints, use strong authentication (OAuth scopes, signed webhooks, etc.), and never pass raw sensitive data unless absolutely needed. For example, if an n8n workflow fetches customer names, consider whether it can hash or truncate the data instead of sending full records. Keep an eye on any automation that bridges between an internal database and the internet. Audit and log the data flows. Build your automations defensively. When building each step, the team should ask, “Is this exposing too much?”

- Governance for AI agents: Many marketing teams regularly experiment with AI agents. These agents should come with governance by design. That means explicitly limiting what internal systems they can query. For example, if an agent is connected to your email campaign tool and CRM, define in policy exactly which data fields it may read or write. Provide logs of every action it takes. And crucially, ensure that any “dangerous” actions (such as deleting data, sending emails, or modifying customer records) either require human confirmation or are reversible. The rule of thumb is “if an AI-triggered action can’t be undone, a human should sign off first.”

- Training and awareness: Even the best policies fail if marketing teams don’t understand them. Educate everyone on the why behind the rules. For example, explain how a single prompt could leak data or why using the enterprise ChatGPT matters for compliance. Share real-world examples (like how confidential sales data could accidentally train an external model). Building awareness makes it less likely that someone “accidentally” tries to optimize a campaign by dumping private data into an AI. Most of the time, misuse is unintentional. Clear policies, coupled with training, ensure that marketers see governance as enabling responsible innovation rather than as red tape.
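To make the agent-governance rule of thumb above more concrete, here is a minimal sketch of a gate that sits between an AI agent and internal systems. It returns only allowlisted fields, logs every action, and refuses irreversible actions unless a human has approved them. The field names, action names, and the `AgentGate` class are all hypothetical; a real deployment would pull this policy from a governed source rather than hard-coding it.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical policy: fields the agent may read, and actions that cannot be undone
READABLE_FIELDS = {"company", "industry", "lead_score"}
IRREVERSIBLE_ACTIONS = {"send_email", "delete_record", "update_customer"}


@dataclass
class AgentGate:
    audit_log: list = field(default_factory=list)

    def read(self, record: dict) -> dict:
        """Return only the allowlisted fields of a record and log the access."""
        visible = {k: v for k, v in record.items() if k in READABLE_FIELDS}
        self.audit_log.append(("read", sorted(visible)))
        return visible

    def act(self, action: str, approved_by: Optional[str] = None) -> bool:
        """Allow an action only if it is reversible or a human approved it."""
        allowed = action not in IRREVERSIBLE_ACTIONS or approved_by is not None
        self.audit_log.append((action, "allowed" if allowed else "blocked", approved_by))
        return allowed


gate = AgentGate()
crm_record = {"company": "Acme", "email": "jane@acme.example", "lead_score": 87}
print(gate.read(crm_record))                       # email is filtered out
print(gate.act("send_email"))                      # blocked: no human sign-off
print(gate.act("send_email", approved_by="lead"))  # allowed after approval
```

The design choice here is to deny by default: a field or action the policy does not mention is never exposed or executed silently, and the audit log records blocked attempts as well as allowed ones.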

In summary, “good” AI use in marketing looks like the usual blend of speed and safeguards. Everyone can still move fast, but with a net under them. Guardrails don’t have to slow innovation. Done right, they let teams experiment boldly without risking a data breach.

The Visibility Problem

Even with rules in place, there’s one big challenge: most organizations don’t actually know what’s happening with AI.

Leaders struggle to answer:

- Which AI tools are our people using?

- What data is flowing into them?

- Who is accessing what through these pipelines?

This lack of visibility means governance initiatives are often reactive. A policy might say “no PII in AI prompts,” but unless you can actually scan and log prompts centrally, how do you enforce it? Many companies only realize there was an issue after something goes wrong.
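As a rough illustration of what scanning and logging prompts centrally could involve, the sketch below redacts two kinds of sensitive values from a prompt and records an audit entry for each request. The regular expressions are deliberately simple and will miss many real-world formats, and the function name, log structure, and sample values are assumptions made for this example. It is not a description of how Singulr or any other product works.

```python
import hashlib
import re
from typing import Dict, List

# Illustrative patterns only; production detection needs far broader coverage
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "card_number": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}

AUDIT_LOG: List[Dict] = []


def check_prompt(user: str, tool: str, prompt: str) -> str:
    """Redact known patterns, record what was found, and return the safe prompt."""
    findings = []
    safe = prompt
    for label, pattern in PATTERNS.items():
        safe, count = pattern.subn(f"[REDACTED_{label.upper()}]", safe)
        if count:
            findings.append(label)
    AUDIT_LOG.append({
        "user": user,
        "tool": tool,
        "findings": findings,
        # Store a hash instead of the raw prompt so the log holds no plaintext PII
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    })
    return safe


sample = "Email jane.doe@example.com about card 4111 1111 1111 1111 today"
print(check_prompt("marketer-1", "chat-assistant", sample))
print(AUDIT_LOG[-1]["findings"])
```

With a record like this for every request, a "no PII in AI prompts" policy becomes something a team can measure: how often it was triggered, by which tool, and whether the redaction held.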

This is where a new approach is needed. To secure AI in marketing (and beyond), companies need tools that give unified visibility into all AI activity across the enterprise.

How Singulr Helps

Most organizations can write AI policies. The harder problem is proving those policies are actually enforced where work happens, across public AI tools, embedded copilots, internal models, and agentic workflows that move data between systems. That gap between governance intent and runtime reality is where preventable exposure occurs.

Singulr closes that control gap. It is the enterprise AI and agentic control plane that translates governance intent into runtime enforcement and continuously verifies that controls are performing as expected over time. This gives security and risk teams runtime certainty.

In practice, Singulr supports four outcomes that map to how AI operates inside real enterprises:

1) Enterprise-wide discovery and topology mapping

Singulr identifies AI services and AI-enabled features in use across the organization, including public AI applications, embedded AI in SaaS platforms, browser-based extensions, and internal AI services and agents. It also maps the relevant topology (where AI connects to data sources, endpoints, and downstream tools), so teams can see how a marketing workflow actually moves information across systems.

2) Runtime governance that becomes enforceable intent

Singulr turns high-level requirements into operational policies that can be applied consistently across tools and interactions. That includes defining approved services, restricting tool categories, applying geo-based constraints, and establishing ownership and accountability for AI services and agentic workflows. Governance becomes executable intent rather than static guidance.

3) Runtime control and real-time enforcement

Singulr enforces policies at execution time, where the risk actually occurs. It monitors AI interactions and applies enforcement actions such as blocking, restricting, step-up workflows, and sensitive data redaction. For marketing teams, this is especially relevant for file uploads, prompt content, and automation pipelines that might inadvertently carry PII, PHI, financial data, or confidential internal material into third-party tools.

4) Assurance and measurable control performance

Controls are often assumed to work because they are configured once. In reality, they drift as tools change, new features appear, and workflows evolve. Singulr measures enforcement reliability, identifies coverage gaps, and detects control drift over time. This creates defensible evidence that governance intent is being upheld, and it reduces preventable escalations that would otherwise become downstream security incidents.

The result is that marketing can keep moving quickly with AI, while security and risk teams gain consistent enforcement and audit-ready proof across the AI surface area. AI adoption stops being a collection of unmanaged exceptions and becomes an operationally controlled, continuously verified part of the enterprise environment.

Conclusion

Marketing today is a blend of art and engineering. It sits directly atop data and AI systems, so it must be treated as part of the security domain. The marketing function is no longer an isolated creative silo. It’s now a data-rich execution layer that reaches deeply into enterprise systems.

This means treating AI investments and data governance as inseparable. It means giving marketing teams the power to experiment while keeping risk visible. The organizations that win will combine the speed of AI with visibility and control - keeping AI a competitive advantage and a powerful marketing multiplier, not a liability.

Frequently Asked Questions

1. Is Singulr designed to block AI use in marketing, or to enable it safely?

Singulr is a control plane. The goal is controlled acceleration: giving marketing teams the freedom to move fast with AI while ensuring that sensitive data, agentic workflows, and third-party tool integrations operate within enforceable boundaries. Security teams gain runtime certainty. Marketing teams keep their velocity.

2. How does Singulr address shadow AI in marketing environments specifically?

Shadow AI is a visibility problem before it is a security problem. Singulr's enterprise-wide discovery maps all AI services in use across the organization, including public AI applications, browser-based tools, embedded AI in SaaS platforms, and unsanctioned tools employees adopt without IT approval. Once you can see it, you can govern it. Singulr Runtime Governance™ then enforces approved service boundaries so that unauthorized tools are blocked at the point of use, not discovered after a breach.

3. Can Singulr enforce data protection within AI prompts and automation pipelines?

Yes. Singulr enforces policies at execution time, where exposure actually occurs. For marketing workflows, this means detecting and redacting PII, PHI, and sensitive financial data within prompts, file uploads, and automation pipelines before that content reaches third-party AI tools. Enforcement happens in real time, not on the next scan cycle.

4. How does Singulr handle AI agents that marketing teams deploy, such as CRM-connected assistants or lead enrichment workflows?

Agentic workflows require governance by design, not after the fact. Singulr maps the full dependency graph of every agent, including tool connections, data access, MCP server bindings, and permission chains. Singulr enforces policies based on agent type, data sensitivity, and tool access scope, and alerts on runtime drift when agent behavior deviates from the approved baseline. Unauthorized actions, such as accessing data fields outside the defined scope or making unapproved outbound calls, are blocked at execution time through Singulr Runtime Control™.

5. How does Singulr provide audit-ready proof that AI governance policies are actually being followed?

Most governance programs can produce a policy document. Few can prove enforcement. Singulr measures control effectiveness continuously, identifies coverage gaps, and detects drift as tools change and workflows evolve. The Singulr Assurance Layer provides independent, tamper-evident verification of AI control performance, giving compliance, risk, and security teams longitudinal proof that governance intent is being upheld across the full AI environment, not just at the point of initial configuration.

The Hidden AI Risk in Your Marketing Stack: Shadow AI, Automation Pipelines, and the Hidden Data Leaks
